Augmented reality applications give sighted users an enhanced, live view of a physical, real-world environment, but that computer-generated visual overlay is of no use to people who can't see. Aiming to make the technology just as useful for blind people, Suranga Nanayakkara of the Singapore University of Technology and Design, together with PhD student Roy Shilkrot and Pattie Maes of the MIT Media Lab's Fluid Interfaces Group, has created a finger-worn device that converts images into audio feedback.

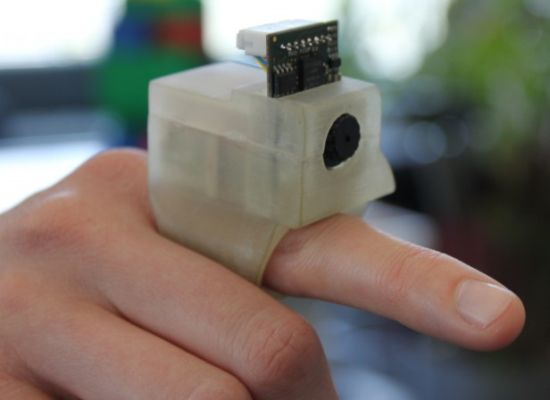

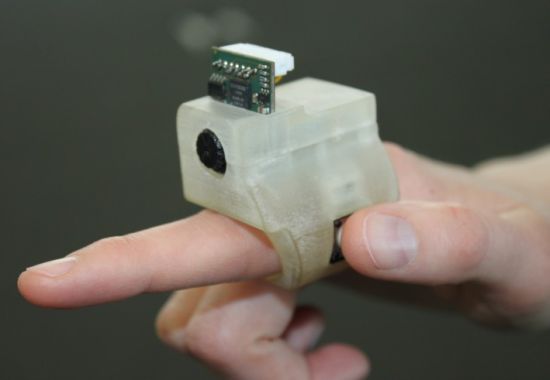

Dubbed EyeRing, the device comprises a small VGA camera, a Bluetooth radio module, a 16 MHz AVR processor and a 3.7V Li-ion battery, neatly enclosed in a 3D-printed ABS nylon outer housing. Other components include a mini-USB port, a power on/off switch and a thumb-activated button. The mini-USB port serves the dual purpose of charging the battery and reprogramming the unit, while the thumb-activated button lets the wearer trigger commands quickly.

To use it, the wearer points the ring's camera at an object, gives a verbal command through a microphone and then clicks the button to take a photograph. The photo is sent to a Bluetooth-linked smartphone, where an Android app analyzes the image and announces the result through an earphone. The prototype EyeRing system can help users correctly identify currency, text, pricing information on tags and colors. Another possible use is cane-free navigation.
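For readers who think in code, the flow described above (spoken command, photo, analysis on the phone, spoken result) can be sketched roughly as follows. This is only an illustration, not the EyeRing software: every name here (`Request`, `MODES`, `handle_request` and the placeholder analyzers) is hypothetical, and the analyzers return fixed strings instead of doing any real image processing.

```python
from dataclasses import dataclass
from typing import Callable, Dict


# Hypothetical placeholder analyzers. A real app would run image
# recognition here; these only return a fixed phrase.
def describe_currency(image: bytes) -> str:
    return "currency result (placeholder)"


def describe_text(image: bytes) -> str:
    return "text result (placeholder)"


def describe_price_tag(image: bytes) -> str:
    return "price tag result (placeholder)"


def describe_color(image: bytes) -> str:
    return "color result (placeholder)"


# Assumed mapping from the spoken command to the analyzer it selects.
MODES: Dict[str, Callable[[bytes], str]] = {
    "currency": describe_currency,
    "text": describe_text,
    "price tag": describe_price_tag,
    "color": describe_color,
}


@dataclass
class Request:
    """What the phone receives: the spoken command and the photo."""
    command: str
    image: bytes


def handle_request(request: Request, speak: Callable[[str], None]) -> str:
    """Pick an analyzer from the command, run it, and speak the result."""
    analyzer = MODES.get(request.command.strip().lower())
    if analyzer is None:
        message = f"unknown command: {request.command}"
    else:
        message = analyzer(request.image)
    speak(message)  # stands in for audio output through the earphone
    return message


if __name__ == "__main__":
    # print() stands in for text-to-speech in this sketch.
    handle_request(Request(command="color", image=b"fake-photo"), print)
```

Keeping the command-to-analyzer mapping in one table means a new recognition mode could be added without touching the request handling, which is one plausible way to organize such an app.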

Via: Gizmag